Bill Roberts, founder of Swirrl and a linked open data enthusiast working on PublishMyData, reprises his presentation at #DataImpact2016, arguing that what the data economy needs now is its own industrial revolution.

A couple of weeks ago, I took part in a panel discussion at #DataImpact2016 in Glasgow, an event organised by the UK Data Service. This article is loosely based on my speech.

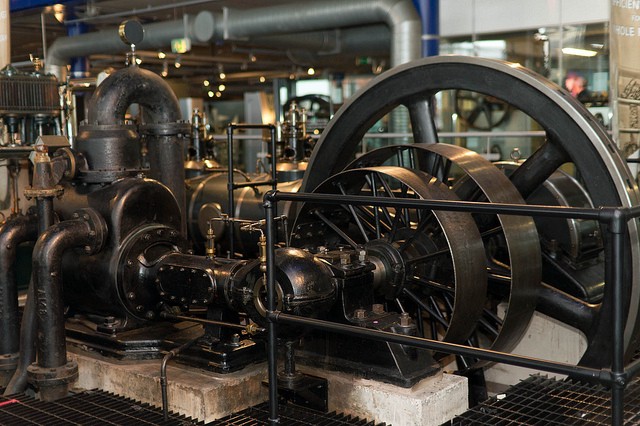

Photo credit: “Engine” by fatedsnowfox https://www.flickr.com/photos/fatedsnowfox/6621685383

The most important impact of better open data is enabling people to make better decisions. This is an increasingly common theme in public discourse about data: it’s at the core of the UK Statistics Authority’s strategy, and Swirrl held a conference on it earlier this year.

But that only comes at the end of a series of connected steps: enabling better decisions with data is a whole value chain.

The value chain consists of a set of steps that most people working with data will recognise: collecting, collating and cleaning, standardising, documenting, disseminating, discovering, combining, analysing and, finally, well-informed decision-making.

The striking thing about how we generate impact from data is that right now it’s mostly a cottage industry. Individuals or small groups with appropriate skills tend to do many or most steps in that chain. We’re still at the stage where a master furniture maker has to chop down his own trees.

What we need in the data economy is our own industrial revolution. That means specialisation and automation: building components that can be exploited by others and agreeing standards so that different players can interact and combine their efforts. At each stage in the value chain we need to create a market where innovation and competition can thrive.

Swirrl’s data publishing and dissemination platform (‘PublishMyData’) sits somewhere in the middle of this value chain.

Generally we work with data that has already been collected and collated. We provide tools to help data owners describe and structure their data, building new interconnections; and by providing a range of user interface, download and API options, we make it easier to use. That moves the data a couple of steps further along the spectrum from raw material to finished goods. We send it out a bit more valuable than when it came in.

Sometimes our output is used directly by the ‘deciders’; sometimes it needs more analysis, and we pass on building blocks to researchers and data scientists, who in turn add further value to the data, ‘assembling’ it for the final user.

Thinking of the process in this way helps identify the bottlenecks, helps to clarify the roles of different players and helps us take the steps towards an efficient knowledge economy that meets the needs of our society.

(I’m guessing there is already good work on this topic that I’m not aware of: I’d welcome any pointers or links! And I’m hoping this might spark some interesting discussion. Do please comment on this post via Medium, or ping me on Twitter.)